Error Code Creeper: Urgent Troubleshooting Guide

Learn to identify, diagnose, and fix error code creeper quickly with practical steps, a diagnostic flow, and expert tips from Why Error Code.

Error code creeper is a class of cryptic, intermittent codes that show up during startup or data processing, often due to misformatted input, misconfigurations, or unstable dependencies. The quickest fix is to validate inputs and environment, reset to a clean state, and review recent changes. If unresolved, collect logs and escalate following your incident workflow.

What 'error code creeper' means in practice

Error code creeper represents a family of elusive, often intermittent codes that appear at critical moments in a system’s lifecycle—on boot, during a transfer, or while processing data. Unlike explicit failure codes, creepers signal a fault hidden beneath the surface: a mismatch between expected and received data, an unstable external dependency, or a race condition that only surfaces under load. For developers and IT pros, creeper codes demand a disciplined approach: isolate the failing subsystem, reproduce the issue in a controlled environment, and collect structured evidence such as timestamps, correlation IDs, and the exact input that preceded the error. According to Why Error Code, creeper codes are especially likely when teams deploy rapid changes or disable safeguards to speed up delivery, because those shortcuts can expose fragile interlocks and unvalidated data paths. The objective is not to memorize every creeper variant but to build a repeatable debugging workflow that identifies the root cause quickly and safely.

Why it matters: creeper codes can cascade into outages if left unaddressed, so prioritizing rapid triage and rigorous data collection minimizes business impact and mean time to repair.

Why creeper codes happen: common patterns

Creeper codes are typically caused by a handful of repeatable patterns, which is why a structured diagnostic approach is so valuable. The most common sources include data misalignment (unexpected or corrupted input), misconfigurations (incorrect environment variables, missing feature flags), unstable dependencies (library or API version mismatches), and timing-related issues (race conditions or slow external services). In practice, you’ll often see creeper codes when new features are merged without comprehensive integration tests, when config drift occurs across environments, or when input validation is overly permissive. Why Error Code emphasizes starting with environment parity checks: identical configurations across dev, staging, and production reduce the likelihood of creeper events. By documenting observed patterns over time, teams can map creeper variants to specific subsystems, enabling faster, targeted fixes.

Takeaway: prioritize reproducibility and correlation data; creepers thrive on subtle, repeatable conditions rather than single-point failures.
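An environment parity check can start as a plain diff of the settings each environment actually loads. The following is a minimal sketch, not a prescribed tool: the function name `config_drift`, the `MISSING` placeholder, and the sample keys are all hypothetical and chosen only for illustration.

```python
from typing import Any, Dict, Tuple

MISSING = "<missing>"  # placeholder for a key that one side does not define


def config_drift(
    reference: Dict[str, Any], candidate: Dict[str, Any]
) -> Dict[str, Tuple[Any, Any]]:
    """Return keys whose values differ between two environment configs.

    Each entry maps a key to (reference_value, candidate_value); a key
    absent on one side is reported with the MISSING placeholder.
    """
    drift = {}
    for key in sorted(set(reference) | set(candidate)):
        ref_value = reference.get(key, MISSING)
        cand_value = candidate.get(key, MISSING)
        if ref_value != cand_value:
            drift[key] = (ref_value, cand_value)
    return drift


# Hypothetical settings, for illustration only.
staging = {"DB_POOL_SIZE": "20", "FEATURE_NEW_PARSER": "on", "API_TIMEOUT_S": "5"}
production = {"DB_POOL_SIZE": "20", "API_TIMEOUT_S": "30"}

for key, (expected, actual) in config_drift(staging, production).items():
    print(f"{key}: staging={expected!r} production={actual!r}")
```

Running a check like this in CI, before each deployment, is one way to surface a missing feature flag or a diverging timeout before it turns into an intermittent failure.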

Quick fixes you can try now

If you’re facing a creeper code, begin with rapid, low-risk actions to confirm whether the issue is data- or configuration-driven. First, validate inputs and sanitize data to rule out malformed payloads. Next, reset affected services or modules to a clean state and re-run with minimal features enabled to narrow the scope. Enabling enhanced logging and turning on request tracing helps capture the exact sequence leading to the error. If the problem recurs, ensure dependency versions are explicitly pinned and that environment variables match across environments. As Why Error Code notes, a fast rollback to a known good state can stop a creeping issue from escalating while you investigate deeper, and it’s essential to maintain a changelog so you can correlate fixes with subsequent occurrences.

Rule of thumb: do a quick validation, a controlled reset, and then pivot to deeper diagnostics if the issue persists.
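As an illustration of the quick validation step, the sketch below checks a payload against a strict, hand-written schema using only the standard library. The schema `ORDER_SCHEMA`, its field names, and the function `validate_payload` are assumptions made for this example; a real system would more likely rely on its own contract definitions or a dedicated schema library.

```python
from typing import Any, Dict, List

# Hypothetical contract: field name -> required Python type.
ORDER_SCHEMA: Dict[str, type] = {"order_id": str, "quantity": int, "unit_price": float}


def validate_payload(payload: Dict[str, Any], schema: Dict[str, type]) -> List[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for field, expected_type in schema.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
            continue
        value = payload[field]
        wrong_type = not isinstance(value, expected_type)
        # bool is a subclass of int in Python, so reject it for numeric fields.
        bool_as_number = isinstance(value, bool) and expected_type is not bool
        if wrong_type or bool_as_number:
            problems.append(
                f"{field}: expected {expected_type.__name__}, got {type(value).__name__}"
            )
    # Strict mode: unknown fields are reported instead of silently accepted.
    for field in sorted(set(payload) - set(schema)):
        problems.append(f"unexpected field: {field}")
    return problems


suspect = {"order_id": "A-1", "quantity": "2", "extra": 1}
for problem in validate_payload(suspect, ORDER_SCHEMA):
    print(problem)
```

Returning every problem at once, rather than failing on the first, tends to be more useful during triage because a malformed payload often breaks several fields together.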

Deeper diagnostics for stubborn creeper codes

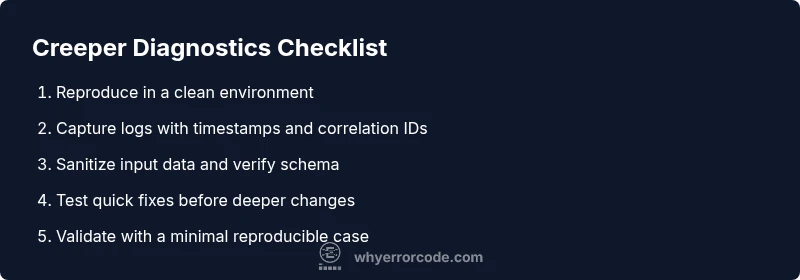

When quick fixes don’t resolve the issue, escalate to deeper diagnostics. Reproduce the error with a deterministic dataset in a staging environment, enable full-stack traces, and capture timing information for each operation. Create a reproducible test case and run it under load to see if the creeper is timing-related. Review recent commits for changed data contracts, initialization order, or race-prone code paths. Use correlation IDs to stitch together logs from different services, and compare successful vs. failing runs to isolate the divergence. If the creeper remains elusive, augment your observability with custom metrics that indicate data cleanliness, feature flag state, and dependency health.

Guidance from Why Error Code: a disciplined, data-driven approach reduces guesswork and accelerates resolution for complex creeper scenarios.
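To make the idea of stitching logs together and comparing runs concrete, here is a small sketch. It assumes log records are already parsed into dictionaries with `ts`, `correlation_id`, `service`, and `event` keys; those field names, the sample records, and both helper functions are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional


def group_by_correlation_id(records: Iterable[dict]) -> Dict[str, List[dict]]:
    """Collect log records per correlation ID, ordered by timestamp."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["correlation_id"]].append(record)
    for events in grouped.values():
        events.sort(key=lambda r: r["ts"])
    return dict(grouped)


def first_divergence(good: List[dict], bad: List[dict]) -> Optional[int]:
    """Return the index of the first step where two runs differ, or None."""
    good_steps = [(r["service"], r["event"]) for r in good]
    bad_steps = [(r["service"], r["event"]) for r in bad]
    for index, (g, b) in enumerate(zip(good_steps, bad_steps)):
        if g != b:
            return index
    if len(good_steps) != len(bad_steps):
        # One run stopped early; the divergence is where the shorter one ends.
        return min(len(good_steps), len(bad_steps))
    return None


# Hypothetical records from three services, interleaved as they might arrive.
records = [
    {"ts": 1.0, "correlation_id": "req-ok", "service": "api", "event": "received"},
    {"ts": 1.1, "correlation_id": "req-bad", "service": "api", "event": "received"},
    {"ts": 1.2, "correlation_id": "req-ok", "service": "parser", "event": "parsed"},
    {"ts": 1.3, "correlation_id": "req-bad", "service": "parser", "event": "schema_mismatch"},
    {"ts": 1.4, "correlation_id": "req-ok", "service": "store", "event": "saved"},
]

runs = group_by_correlation_id(records)
step = first_divergence(runs["req-ok"], runs["req-bad"])
print(f"runs diverge at step {step}: {runs['req-bad'][step]['event']}")
```

The first point of divergence is usually a better starting place for investigation than the final error message, which may only be a downstream symptom.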

Collecting data: logs, traces, and reproducibility

Robust data collection is the backbone of solving creeper codes. Gather structured logs with timestamped events, error messages, stack traces, and input payloads (where permissible) for both failing and successful runs. Enable distributed tracing to link events across services, and capture environment details (OS, container version, runtime) and dependency versions. Create a minimal reproducible example that triggers the creeper in a controlled setting; this is invaluable for collaborative debugging and for communicating with stakeholders. Remember to anonymize sensitive data before sharing. If you cannot reproduce, document the conditions where it occurs, the steps to reproduce, and any observed side effects. Why Error Code stresses keeping a living runbook—each creeper case should feed back into improved detection and prevention.
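One possible shape for such structured, shareable logs is one JSON object per line, with sensitive fields redacted before they are written. The sketch below uses Python's standard `logging` module; the `SENSITIVE_KEYS` set, the `redact` helper, the `JsonFormatter` class, and the logger name are assumptions introduced for the example.

```python
import json
import logging
from datetime import datetime, timezone

SENSITIVE_KEYS = {"password", "token", "email"}  # hypothetical list


def redact(payload: dict) -> dict:
    """Replace values of sensitive keys before the payload is logged."""
    return {k: ("[REDACTED]" if k in SENSITIVE_KEYS else v) for k, v in payload.items()}


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "ts": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "message": record.getMessage(),
            # Fields passed via `extra=` become attributes on the record.
            "correlation_id": getattr(record, "correlation_id", None),
            "payload": getattr(record, "payload", None),
        }
        if record.exc_info:
            entry["stack_trace"] = self.formatException(record.exc_info)
        return json.dumps(entry)


logger = logging.getLogger("creeper_demo")
handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
logger.addHandler(handler)
logger.setLevel(logging.INFO)

logger.info(
    "import failed",
    extra={"correlation_id": "req-123", "payload": redact({"user": "u1", "token": "abc"})},
)
```

Because every line is valid JSON with the same keys, failing and successful runs can be filtered and compared by correlation ID in whatever log aggregation tool is in use.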

Prevention and maintenance: long-term strategies

Preventing creeper codes requires proactive maintenance and disciplined change management. Enforce strict input validation and contract testing to catch misalignments early. Implement robust feature flag controls and gradual rollout to minimize exposure, and maintain clear environment parity across development, staging, and production. Regularly audit dependencies for compatibility and security advisories, and set up automated health checks that flag unusual timing or latency patterns. Invest in centralized log aggregation, standardize error formats, and train teams to recognize creeper indicators early. By applying these practices, you reduce the surface area for creeper codes and improve overall system resilience.

Bottom line: creeper prevention is a continuous process, not a one-time fix.
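A health check that flags unusual latency does not need to be elaborate. The sketch below marks samples that sit far from the median using a modified z-score based on the median absolute deviation. This is one common outlier heuristic, swapped in here for illustration; the function name `latency_outliers`, the 3.5 threshold, and the sample values are assumptions rather than recommendations for any particular system.

```python
from statistics import median
from typing import List, Sequence


def latency_outliers(samples_ms: Sequence[float], threshold: float = 3.5) -> List[int]:
    """Return indexes of samples that sit far from the median.

    Uses the modified z-score based on the median absolute deviation (MAD),
    which is less distorted by the outliers themselves than mean/stddev.
    """
    if len(samples_ms) < 3:
        return []
    mid = median(samples_ms)
    mad = median(abs(x - mid) for x in samples_ms)
    if mad == 0:
        # At least half the samples equal the median; flag anything that differs.
        return [i for i, x in enumerate(samples_ms) if x != mid]
    return [
        i for i, x in enumerate(samples_ms)
        if 0.6745 * abs(x - mid) / mad > threshold
    ]


# Hypothetical response times in milliseconds.
samples = [102, 98, 101, 99, 100, 450, 97]
for index in latency_outliers(samples):
    print(f"sample {index} looks unusual: {samples[index]} ms")
```

A median-based measure is used because a single very slow request inflates the mean and standard deviation, which can hide the very outlier the check is meant to catch.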

Final note on urgency and safety

Error code creeper events can threaten service reliability quickly, so treat them as high-priority incidents. Do not bypass safety protocols or backups when attempting fixes; ensure you have tested rollback plans and data protection in place. If you’re dealing with sensitive data, consider security review before deploying any fix, especially if it involves input sanitization or changes to authentication flows. When in doubt, escalate to a professional or senior engineer who can lead a coordinated incident response. The strategic combination of rapid triage, rigorous data collection, and disciplined remediation is your fastest path from creeper chaos to stable operation.


Steps

Estimated time: 30-90 minutes

1. Reproduce in a controlled environment

Create a minimal, deterministically failing scenario that consistently triggers the creeper. Use a clean install, minimal feature set, and exact input that previously caused the error. Document the observed behavior and timestamps to establish a baseline.

Tip: Isolate the feature flags and disable nonessential plugins to narrow the root cause.

2. Collect logs and enable tracing

Turn on verbose logging and request tracing across all involved services. Capture stack traces, correlation IDs, and the exact payloads that precede the error. Save logs to a central repository for cross-service analysis.

Tip: Use a reproducible test case to verify whether a fix reduces recurrence.

3. Apply quick, validated fixes

Implement input validation, sanitize data, pin dependency versions, and perform a controlled reset of affected services to a known good state. Validate with the reproducible test case after each change.

Tip: Document every change and its effect to enable quicker incident response next time.

4. Verify and monitor

Run end-to-end checks under normal load and then under simulated peak load. Monitor for any recurrence of the creeper; verify that all related metrics stay healthy and that no new errors appear in logs.

Tip: Set up alerting thresholds so a creeper is detected early in production.
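The advice in step 3 to pin dependency versions can be verified mechanically. The following sketch compares a set of expected pins against what is actually installed, using the standard library's `importlib.metadata`. The helper names and the pinned package and version shown are hypothetical; substitute the pins from your own lock file.

```python
from importlib import metadata
from typing import Callable, Dict


def installed_version(package: str) -> str:
    """Look up the installed version, or '' if the package is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return ""


def pin_mismatches(
    pins: Dict[str, str], lookup: Callable[[str], str] = installed_version
) -> Dict[str, str]:
    """Return {package: 'expected X, found Y'} for every pin that is not met."""
    problems = {}
    for package, expected in pins.items():
        found = lookup(package)
        if found != expected:
            problems[package] = f"expected {expected}, found {found or 'not installed'}"
    return problems


# Hypothetical pins; the result depends on the environment this runs in.
pins = {"requests": "2.31.0"}
for package, problem in pin_mismatches(pins).items():
    print(f"{package}: {problem}")
```

The `lookup` parameter exists so the comparison can be exercised in tests without depending on the packages installed on the machine running them.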

Diagnosis: User reports intermittent or mysterious error code creeper that appears during startup or data processing

Possible Causes

- High: Misformatted input data or unexpected field values

- Medium: Race condition during initialization

- Low: Outdated dependencies or incompatible library versions

Fixes

- Easy: Validate and sanitize all incoming data; implement strict schema checks

- Easy: Reproduce with a controlled dataset; enable verbose logging and trace IDs

- Medium: Update dependencies to compatible versions; test in staging

- Hard: Review initialization order and race conditions; add synchronization
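For the last fix in the list above, adding synchronization around initialization, a minimal sketch might look like the following. It guards a lazily created resource with a lock so that concurrent callers trigger initialization only once. The class `LazyResource` and the `slow_factory` function are hypothetical stand-ins for whatever your system sets up at startup.

```python
import threading
import time


class LazyResource:
    """Initialize an expensive resource once, even under concurrent access."""

    def __init__(self, factory):
        self._factory = factory
        self._lock = threading.Lock()
        self._value = None
        self._ready = False

    def get(self):
        # Fast path: skip the lock once initialization has completed.
        if self._ready:
            return self._value
        with self._lock:
            # Re-check inside the lock: another thread may have finished
            # initialization while this one was waiting.
            if not self._ready:
                self._value = self._factory()
                self._ready = True
        return self._value


calls = []


def slow_factory():
    calls.append(1)      # record each initialization attempt
    time.sleep(0.05)     # widen the race window for the demo
    return {"connected": True}


resource = LazyResource(slow_factory)
threads = [threading.Thread(target=resource.get) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(f"factory ran {len(calls)} time(s)")  # prints "factory ran 1 time(s)"
```

Without the lock, several threads could pass the readiness check at the same moment and each run the factory, which is the kind of timing-dependent behavior that shows up as a creeper only under load.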

Frequently Asked Questions

What is an error code creeper and how is it different from a standard error code?

An error code creeper is a cryptic, intermittent code that surfaces under specific conditions, often driven by timing, data shape, or environment drift. Unlike a standard, deterministic error, creepers require a structured investigation to identify subtle root causes.

A creeper is a cryptic, intermittent error that needs a careful, structured check to find the root cause.

What are the most common causes of creeper codes?

Most creeper codes stem from input data issues, misconfigurations, mismatched dependencies, or race conditions during startup. These are typically reproducible under controlled tests and can be isolated with detailed logs and traces.

Common causes are data issues, misconfigurations, incompatible dependencies, or timing problems.

When should I involve a professional or security team?

If creeper codes threaten data integrity or security, or occur in environments that handle sensitive data, escalate to a senior engineer or security team. If rapid remediation is beyond your team's capabilities, seek external expertise promptly.

If data security is at risk or fixes exceed team capabilities, involve professionals quickly.

How long do creeper fixes typically take?

Repair time varies by complexity: quick validation and rollback can take minutes to an hour, while full root-cause analysis with regression testing may take several hours. Always communicate expected windows to stakeholders.

Fix times vary; some are quick, others need a few hours with testing.

Are creeper codes tied to specific software or hardware?

Creeper codes can arise in any stack, but they’re often tied to software layers with flaky input handling, third-party dependencies, or unusual hardware timing. They’re less about the platform and more about data contracts and synchronization.

They can show up in any stack, usually tied to data handling and timing rather than a single platform.

What data should I collect to troubleshoot creeper codes effectively?

Collect structured logs with timestamps, correlation IDs, and input payloads; enable tracing across services; capture environment details and dependency versions; reproduce with a minimal dataset. An organized runbook makes it faster to diagnose in the future.

Gather logs, traces, input data, and environment details to speed up diagnosis.

Top Takeaways

- Identify creeper roots quickly with reproducible tests.

- Collect structured evidence to pinpoint the failure path.

- Apply quick fixes first, then escalate to deeper debugging.

- Invest in proactive monitoring to prevent creeper recurrence.