How to Fix Error Code QuickSilver: A Practical Troubleshooting Guide

A comprehensive, step-by-step guide to diagnosing and resolving the QuickSilver error code. Learn diagnostic data collection, safe remediation sequences, and preventive measures to minimize recurrence.

By following these steps, you’ll identify what the QuickSilver error code indicates, diagnose its root causes, and apply practical fixes to restore normal operation. You’ll need access to the affected device, the exact error code and logs, and appropriate permissions. This guide covers common causes, a clear remediation sequence, and safety tips to prevent data loss or further damage.

Understanding the QuickSilver error code

The QuickSilver error code is a generic signal used by the QuickSilver subsystem to indicate a failure that prevents a component from starting or completing its task. In practice, different environments may map the same code to different failure modes, so you should always pair it with accompanying messages, logs, and timestamps. For developers and IT pros, the most helpful approach is to assign the code to a family: initialization errors, timeouts, resource exhaustion, or permission problems. By recognizing the family, you can apply a focused remediation path rather than chasing vague symptoms. Why Error Code emphasizes that context matters: two identical codes in different stacks may require different fixes. This section sets expectations for what you’ll diagnose next: where the failure originates, which subsystems are implicated, and what evidence to collect from logs and metrics.
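As a rough illustration of sorting an error into one of these families, the sketch below matches the accompanying log message against keyword patterns. The patterns and the function name `classify_error_family` are assumptions made for this example; they are not part of any QuickSilver tooling, and real messages in your environment will likely need different keywords.

```python
import re

# Hypothetical keyword patterns; adjust them to the messages your logs actually contain.
FAMILY_PATTERNS = {
    "initialization": re.compile(r"failed to (start|initialize)|init(ialization)? error", re.I),
    "timeout": re.compile(r"timed? ?out|deadline exceeded", re.I),
    "resource_exhaustion": re.compile(
        r"out of memory|no space left|quota exceeded|too many open files", re.I
    ),
    "permission": re.compile(r"permission denied|access denied|unauthorized|forbidden", re.I),
}


def classify_error_family(message):
    """Return the first family whose pattern matches the message, or 'unknown'."""
    for family, pattern in FAMILY_PATTERNS.items():
        if pattern.search(message):
            return family
    return "unknown"
```

A message that matches no pattern falls through to `"unknown"`, which is a useful prompt to collect more context before choosing a remediation path.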

Collecting diagnostic data

Accurate diagnostics start with gathering the right data. Begin by recording the exact error code string, the time it appeared, and the user or service context. Collect log files from all relevant layers: application logs, operating system event logs, and, if applicable, cloud provider audit trails. Export traces and stack traces when available, and note any recent configuration changes. Capture environment details such as OS version, runtime versions, installed packages, and resource usage (CPU, memory, disk I/O). When possible, attach screenshots or log samples that show preceding events. This data not only helps you reproduce the issue later but also provides evidence for stakeholders and, if needed, for vendor support. According to Why Error Code, preparing a concise diagnostic package reduces back-and-forth and accelerates resolution.
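One way to keep this data together is a small, serializable diagnostic package. The sketch below is a minimal example that assumes you already have the error code string, some context, and a few log lines in hand. The field names and the function `build_diagnostic_package` are illustrative choices, not a required format.

```python
import platform
import sys
from datetime import datetime, timezone


def build_diagnostic_package(error_code, context, log_excerpt):
    """Bundle the error code, caller-supplied context, and basic environment details."""
    return {
        "error_code": error_code,
        # UTC timestamp so packages from different machines can be compared.
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "context": context,          # e.g. user, service, recent config changes
        "environment": {
            "os": platform.platform(),
            "python": sys.version.split()[0],
            "machine": platform.machine(),
        },
        "log_excerpt": log_excerpt,  # lines leading up to the failure
    }
```

The result contains only strings, lists, and dictionaries, so it can be written out with `json.dumps(package, indent=2)` and attached to a ticket.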

Reproducing the issue safely

To isolate the root cause, try to reproduce the error in a controlled environment that mirrors production but minimizes risk. Use a staging system or a sandbox with identical configuration where feasible. Follow the exact steps that triggered the error, but avoid actions that could damage data. Record the outcome, timestamps, and any warnings that occur during reproduction. If direct reproduction is not feasible, create a simulated scenario that produces the same error signal, such as enabling a feature flag or toggling a dependent service. This step ensures you can verify fixes later without compromising live data. If the error can be neither reproduced nor simulated, document this clearly in your remediation plan and focus on available traces and evidence. This practice aligns with best-practice guidance from Why Error Code on reproducibility and testability.
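A simulated scenario can be as simple as a flag that forces the failure in a sandbox. The sketch below is hypothetical throughout: `QuickSilverError`, `start_component`, and the `SIMULATE_QUICKSILVER_FAILURE` flag are stand-ins invented for this example, not real QuickSilver names.

```python
import os
from datetime import datetime, timezone


class QuickSilverError(RuntimeError):
    """Hypothetical exception standing in for the real error signal."""


def start_component(env=None):
    """Simulated component start that fails when the (hypothetical) flag is set."""
    env = os.environ if env is None else env
    if env.get("SIMULATE_QUICKSILVER_FAILURE") == "1":
        raise QuickSilverError("simulated QuickSilver failure (fault injection)")
    return "started"


def attempt_reproduction(env):
    """Run one attempt and record its outcome, detail, and start time."""
    record = {
        "started_at": datetime.now(timezone.utc).isoformat(),
        "outcome": None,
        "detail": "",
    }
    try:
        record["detail"] = start_component(env)
        record["outcome"] = "no_error"
    except QuickSilverError as exc:
        record["outcome"] = "reproduced"
        record["detail"] = str(exc)
    return record
```

Passing the environment in as a dictionary keeps the simulation out of the real process environment, so the flag cannot leak into other services on the same host.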

First-pass quick fixes (non-destructive)

Begin with non-destructive actions that restore baseline behavior. Restart the affected service or process in a controlled manner and verify that dependent services come up cleanly. Clear non-persistent caches or reset in-memory state where safe, since stale data often causes intermittent failures. Validate configuration files and environment variables to ensure no typos or missing values. If the error relates to a specific component, test that component in isolation using a minimal workload. Avoid changing core logic or data structures in this pass. The goal is to re-create a stable baseline quickly and with minimal risk. If the error persists after these steps, proceed to deeper diagnostics. This approach follows the phased remediation mindset recommended by Why Error Code to minimize risk early on.
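Checking configuration for typos and missing values is easy to automate. The sketch below assumes the configuration is available as a dictionary, for example `dict(os.environ)`. The function name and the key names used to call it are made up for illustration.

```python
import difflib


def find_config_problems(config, required_keys):
    """List required keys that are missing or blank, with a hint for likely typos."""
    problems = []
    for key in required_keys:
        if key not in config:
            # A near-match among the existing keys often indicates a misspelled name.
            near = difflib.get_close_matches(key, list(config), n=1)
            hint = f" (did you mean {near[0]!r}?)" if near else ""
            problems.append(f"{key}: missing{hint}")
        elif not str(config[key]).strip():
            problems.append(f"{key}: empty")
    return problems
```

The check is read-only, which fits a non-destructive first pass: it reports problems without changing any configuration.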

Checking prerequisites and dependencies

Many QuickSilver failures occur when prerequisites are unmet or dependencies drift. Verify version compatibility for all runtime environments, libraries, and plugins. Confirm that required services are available and properly registered, and that licenses or feature flags are not restricting operation. Check that configuration values align with official docs or runbooks. Where applicable, confirm that environment-specific differences (production vs staging) are accounted for. If a dependency failed to load, trace through the initialization path to see where the dependency is dropped or rejected. Correcting mismatches early is one of the most effective ways to prevent future outages, according to Why Error Code analysis.
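A version compatibility check can be sketched as a comparison between installed versions and documented minimums. The example below assumes purely numeric dotted versions such as `3.10.2`, so pre-release tags or suffixes would need a more robust parser. The component names are placeholders.

```python
def parse_version(text):
    """Parse a dotted numeric version like '3.11.4' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def is_older(current, minimum):
    """Compare versions numerically, padding with zeros so '1.2' equals '1.2.0'."""
    a, b = parse_version(current), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a < b


def find_incompatible(installed, minimums):
    """Return a dict of component name -> problem for unmet minimum versions."""
    issues = {}
    for name, minimum in minimums.items():
        current = installed.get(name)
        if current is None:
            issues[name] = "not installed"
        elif is_older(current, minimum):
            issues[name] = f"{current} is older than required {minimum}"
    return issues
```

Comparing integer tuples rather than strings matters here: as plain strings, `"3.9"` sorts after `"3.10"`, which would hide exactly the kind of drift this check is meant to catch.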

Inspecting network, permissions, and resources

Sometimes the problem is external to the application: network reachability, DNS resolution, or restricted permissions block initialization. Test basic network connectivity to critical endpoints, and verify firewall rules or security groups do not block required ports. Check file and directory permissions on access paths used by the QuickSilver component, ensuring the running user has the necessary read/write rights. Inspect quotas and resource limits to prevent throttling or OOM (out-of-memory) events. If a service relies on a remote API, validate its availability and response times. Document any intermittent network failures or latency spikes that coincide with the error. This holistic check helps identify root causes beyond code issues, a point emphasized by Why Error Code.
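These external checks can be scripted with the standard library alone. The sketch below covers TCP reachability, path permissions for the current user, and free disk space. Hosts, ports, and paths are placeholders you would supply, and the helper names are illustrative.

```python
import os
import shutil
import socket


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections, DNS failures, and timeouts
        return False


def check_path_access(path):
    """Report whether the current user can read and write the given path."""
    return {
        "exists": os.path.exists(path),
        "readable": os.access(path, os.R_OK),
        "writable": os.access(path, os.W_OK),
    }


def free_disk_fraction(path):
    """Return the fraction of free space on the filesystem that holds path."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total if usage.total else 0.0
```

Run these as the same user that runs the affected component. Permission results for an administrator account can differ from those of the service account.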

Deep-dive into common QuickSilver error subclasses

Within the QuickSilver family, several subtypes are frequent culprits: initialization faults when a critical resource fails to start, timeouts when a component does not respond in time, resource exhaustion leading to throttling, and permission-related rejections. For initialization faults, check early startup scripts and startup order. Timeouts often point to misconfigured timeouts, slow downstream services, or blocking calls. Resource exhaustion calls for scaling tests, load profiling, and memory/disk pressure checks. Permission issues usually require reviewing access control lists and credentials. Collect evidence by narrowing the scope to the failing subsystem and iterating on fixes. As you map the error to a subclass, you’ll gain a clearer remediation path and can communicate more effectively with teams and vendors.
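For the timeout subclass, it helps to measure how long a suspect call actually takes against its expected budget. The sketch below is a minimal timing wrapper. It only measures and does not interrupt a slow call, and `timed_call` and its parameters are names chosen for this example.

```python
import time


def timed_call(func, budget_seconds, *args, **kwargs):
    """Call func and return (result, elapsed_seconds, exceeded_budget)."""
    start = time.perf_counter()
    result = func(*args, **kwargs)
    elapsed = time.perf_counter() - start
    return result, elapsed, elapsed > budget_seconds
```

Wrapping each downstream call in turn and logging the elapsed time helps narrow a timeout to a specific slow dependency rather than a misconfigured limit.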

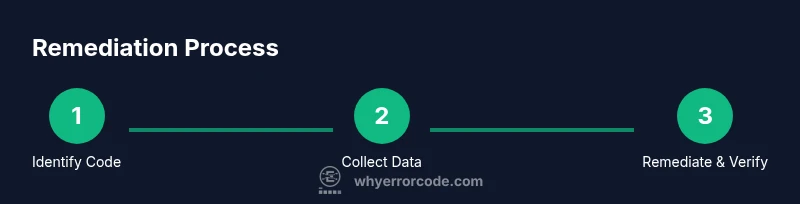

Step-by-step remediation sequence

Follow a structured, sequential plan to resolve the error while preserving data and minimizing risk: 1) Reconfirm the exact error code and gather evidence. 2) Reproduce in a safe environment. 3) Validate prerequisites and dependencies. 4) Restart services and clear temporary state. 5) Check network, permissions, and quotas. 6) Apply targeted configuration fixes (without altering business logic). 7) Run integrity checks and functional tests. 8) Validate end-to-end operation with a controlled workload. 9) Document changes and monitor for recurrence. Each step builds on the previous one to ensure traceability and accountability. This sequence aligns with the recommended remediation model from Why Error Code.
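The sequence above can be sketched as an ordered runner that stops at the first failing step and keeps a journal for traceability. This is an illustrative structure only. The checks themselves are callables you would write for your environment, and `run_sequence` is a hypothetical name.

```python
from datetime import datetime, timezone


def run_sequence(steps):
    """Run (name, check) pairs in order; stop at the first failure.

    Each check is a callable returning a truthy value on success.
    Returns a journal of what ran, when, and with what result.
    """
    journal = []
    for name, check in steps:
        try:
            ok = bool(check())
            detail = ""
        except Exception as exc:  # record the failure instead of aborting the run
            ok = False
            detail = f"{type(exc).__name__}: {exc}"
        journal.append({
            "step": name,
            "ok": ok,
            "detail": detail,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not ok:
            break  # later steps build on earlier ones, so do not continue
    return journal
```

Stopping at the first failure reflects the idea that each step builds on the previous one, and the journal doubles as a record of what was attempted.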

Data integrity and rollback strategies

Before making any changes, ensure you have a rollback plan. Create backups of critical data and configuration states. Use version-controlled configurations where possible, and implement a rollback mechanism that can restore the system quickly to a known-good state. Test the rollback plan in a safe environment to verify it actually restores expected behavior. If the fix requires data migration or schema changes, draft a migration plan with reversible steps and clear rollback criteria. Maintain a detailed changelog describing what was changed, why, and when. Include rollbacks in incident post-mortems to inform future responses. These strategies reduce the risk of data loss and service disruption during remediation and support faster recovery if something goes wrong.
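For a single configuration file, a backup and rollback can be as small as the sketch below. It assumes a plain file on local disk and a `.bak` suffix chosen for this example. Databases and multi-file changes need their own backup tooling.

```python
import shutil
from pathlib import Path


def backup_file(path):
    """Copy path to '<name>.bak' beside it and return the backup path.

    Note: an existing .bak file with the same name is overwritten.
    """
    source = Path(path)
    backup = source.with_name(source.name + ".bak")
    shutil.copy2(source, backup)  # copy2 also preserves timestamps where possible
    return backup


def rollback_file(path, backup):
    """Restore path from a backup created by backup_file."""
    shutil.copy2(backup, path)
```

Running both functions against a throwaway copy of the file in a test directory is a quick way to confirm that the rollback restores the original contents.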

Testing and verification after fix

After implementing the remediation, perform a rigorous verification to ensure the QuickSilver error does not recur. Run automated tests that cover the previously failing path, and have team members perform manual sanity checks. Validate logs to confirm no new error patterns appear. Monitor resource usage and service health indicators for a sustained period to confirm stability. Validate end-to-end workflows under representative load. If tests pass, plan a controlled rollout and communicate results to stakeholders. If the error returns, revisit the remediation steps with fresh evidence. Testing is essential to confirm that the fix is both effective and durable, a principle highlighted by Why Error Code.
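Log validation after a fix can be partly automated by looking for the error marker in entries written after the fix went in. The sketch below assumes each log line begins with an ISO 8601 timestamp followed by a space. That format is an assumption, so adapt the parsing to your own logs.

```python
from datetime import datetime


def errors_since(log_lines, error_marker, since):
    """Return lines stamped at or after `since` whose message contains error_marker."""
    hits = []
    for line in log_lines:
        stamp, _, message = line.partition(" ")
        try:
            when = datetime.fromisoformat(stamp)
        except ValueError:
            continue  # skip lines that do not start with a parseable timestamp
        if when >= since and error_marker in message:
            hits.append(line)
    return hits
```

An empty result over a sustained observation window supports the claim that the fix holds. Any hit is fresh evidence for revisiting the remediation steps.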

Preventive measures and monitoring

Proactive monitoring reduces the chance of recurrence. Implement health checks, automated alerting on error spikes, and trend analysis over time. Enforce configuration management to prevent drift between environments. Establish standardized runbooks and post-release health assessments so teams know exactly what to do if the QuickSilver error resurfaces. Document lessons learned and update training materials. Regularly review dependencies, licenses, and security settings to avoid hidden incompatibilities. A culture of observability helps teams detect and resolve issues before they impact users, in line with Why Error Code guidance.
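Alerting on error spikes usually comes down to counting errors inside a sliding time window. The sketch below is a minimal in-memory version that assumes timestamps arrive in non-decreasing order. The class name, window, and threshold are illustrative, and a production setup would more likely rely on an existing monitoring system.

```python
from collections import deque


class ErrorSpikeDetector:
    """Signal an alert when `threshold` errors occur within `window_seconds`."""

    def __init__(self, window_seconds, threshold):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._events = deque()

    def record(self, timestamp):
        """Record an error at `timestamp` (seconds); return True if an alert should fire."""
        self._events.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while self._events and self._events[0] <= cutoff:
            self._events.popleft()
        return len(self._events) >= self.threshold
```

With a 60-second window and a threshold of 3, three errors within a minute trigger an alert, while the same three errors spread over an hour do not.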

When to escalate or seek support

If remediation steps fail to resolve the issue within a reasonable window, escalate to senior engineers or vendor support. Prepare your diagnostic package with evidence, reproduction steps, and expected vs actual outcomes. Include timestamps, logs, and configurations checked. Communicate clearly what you attempted and what remains unresolved. Escalating early can prevent long delays and help you access specialized tooling or enterprise knowledge. Remember, structured troubleshooting reduces time-to-resolution and improves collaboration across teams, a philosophy Why Error Code advocates.

Tools & Materials

- Command-line interface (CLI) (Bash, PowerShell, or terminal depending on OS)

- Log collection tools (Access application logs, OS logs, and cloud audit trails)

- Error documentation/reference (Official docs or internal runbooks for QuickSilver)

- Text editor (For manual config edits if needed)

- Network diagnostic utilities (Ping, traceroute, nslookup; test endpoints)

- Access credentials (Admin or root credentials for changes)

- Backup tool (Backups of databases or files before changes)

Steps

Estimated time: 60-120 minutes

- 1

Verify prerequisites and collect environment data

Confirm the exact error code and collect context like user, service, time, and environment. Gather initial logs and configuration snapshots to establish a baseline.

Tip: Document sources of truth (logs, configs) before making changes.

- 2

Reproduce the issue in a safe environment

Attempt to recreate the error in staging or a sandbox that mirrors production to gain reliable evidence without risking live data.

Tip: Use the exact reproduction steps from production if possible.

- 3

Validate prerequisites and dependencies

Check versions, libraries, plugins, and service registrations to ensure all prerequisites are present and compatible.

Tip: Create a quick compatibility matrix for future reference.

- 4

Restart services and clear temporary state

Perform a controlled restart and clear non-persistent caches to eliminate stale state that can cause repeats.

Tip: Avoid full system reboots unless absolutely necessary.

- 5

Check network, permissions, and quotas

Test connectivity, DNS, firewall rules, and confirm running user permissions and resource quotas are sufficient.

Tip: Log any transient network issues for correlation.

- 6

Apply targeted configuration fixes

Make minimal, well-documented config changes that address root causes without altering business logic.

Tip: Keep changes small and reversible if possible.

- 7

Run integrity checks and tests

Execute relevant tests to verify the path is now healthy and free of the error signal.

Tip: Automate where feasible for repeated validation.

- 8

Validate end-to-end operation with controlled workload

Perform a limited load test to ensure stability under realistic conditions before a full rollout.

Tip: Monitor latency and error rates during the test.

- 9

Document changes and monitor for recurrence

Record what was changed, why, and results; set up ongoing monitoring to catch regression early.

Tip: Publish the remediation plan to stakeholders.

Frequently Asked Questions

What does the QuickSilver error code mean?

It signals a failure in the QuickSilver subsystem often tied to misconfigurations, dependencies, or resource constraints.

The QuickSilver error code typically signals a configuration or resource issue.

Is this error code hardware or software related?

It can arise from either hardware or software problems; check the environment and dependencies to determine the root cause.

It can be hardware or software; investigate context.

Should I restart the system as part of the fix?

Restarting services in a controlled, incremental way is often a safe first step; avoid full system reboots unless necessary.

Restart services methodically first.

Can data be lost during remediation?

If changes are performed carefully with backups and rollback plans, data loss risk is minimized; plan backups beforehand.

Backups mitigate data loss risk.

How long does it take to fix a QuickSilver error?

Resolution time varies by environment and complexity; follow the structured steps to avoid delays.

Time depends on complexity; follow steps.

When should I escalate to vendor support?

Escalate when diagnostic data is inconclusive, the fix requires vendor tooling, or system uptime is at risk.

If in doubt or time is critical, escalate.

Top Takeaways

- Identify the error family quickly

- Collect complete diagnostic data before changes

- Follow a safe remediation sequence

- Test thoroughly after fix

- Prevent recurrence with monitoring