Is Error Code 529 Dangerous? Urgent Diagnosis and Safe Fixes

Learn whether error code 529 is dangerous, what it signals, and how to diagnose and fix it safely with practical, no-nonsense steps from Why Error Code.

Definition: Error code 529 isn't a standard HTTP status; its meaning depends on the vendor or service. In most systems, a 529-like code signals rate limiting, policy enforcement, or anti-abuse protection rather than a direct security threat. It is not inherently dangerous, but you should audit requests, credentials, and quotas and implement a safe retry strategy.

What 'is error code 529 dangerous' really means

There is no universal 529 status. When you see this code, its meaning is defined by the product, service, or API you are using. According to Why Error Code, such codes typically relate to protective controls (rate limiting, abuse detection, or policy enforcement) rather than an actual security breach. So the question 'is error code 529 dangerous' does not have a one-size-fits-all answer. Most vendors use the code to prevent overload or unauthorized access, not to warn you about malware. The practical takeaway is to locate the exact definition in the provider's docs and cross-check it against your logs and request patterns. If you are developing an integration, map this code to your own retry logic and error-handling strategy so that you respond safely without creating further issues for users or services.

According to Why Error Code, understanding vendor-specific definitions and consistent logging is the first step toward safe remediation.
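One way to map status codes to a handling strategy is a small policy table. The sketch below is illustrative only: the `Action` names and the `VENDOR_POLICY` entries are assumptions, and the entry for 529 in particular should be replaced with whatever your provider's documentation says.

```python
from enum import Enum


class Action(Enum):
    OK = "ok"
    RETRY_WITH_BACKOFF = "retry_with_backoff"
    FIX_CREDENTIALS = "fix_credentials"
    FAIL = "fail"


# Hypothetical policy table: adjust it to match what your provider documents.
VENDOR_POLICY = {
    529: Action.RETRY_WITH_BACKOFF,  # assumed here to mean throttling or overload
    429: Action.RETRY_WITH_BACKOFF,  # standard "Too Many Requests"
    401: Action.FIX_CREDENTIALS,
    403: Action.FIX_CREDENTIALS,
}


def classify(status: int) -> Action:
    """Map an HTTP status code to a handling action."""
    if 200 <= status < 300:
        return Action.OK
    # Anything not listed in the table is treated as a hard failure.
    return VENDOR_POLICY.get(status, Action.FAIL)
```

Keeping the mapping in one table means a change in vendor behavior is a one-line update rather than a hunt through call sites.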

Why 529 codes vary across products

There is no universal meaning for 529 across software stacks. Some platforms reserve 529 for rate limits, others for abuse detection, and a few use it for policy disputes or quota rejections. Because of this variability, developers must consult the exact documentation for the API or service they are using. The Why Error Code team has seen cases where two different services return 529 for different policies, making it easy to misinterpret. When you encounter 529, focus on the accompanying headers, response body, and any Retry-After directives. Look for quota counters in your dashboards and correlate them with recent deploys or traffic surges. If your system relies on multiple downstream services, a 529 in one can cascade into others, masking the root cause. Establish a consistent error-handling strategy across services so that a 529 always yields a clear retry plan and escalation path.
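If the service sends a Retry-After header, it can arrive either as a number of seconds or as an HTTP date. The following is a minimal sketch of reading both forms; the function name `retry_after_seconds` is made up for this example, and whether your provider sends the header at all is something to confirm in its docs.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Mapping, Optional


def retry_after_seconds(
    headers: Mapping[str, str], now: Optional[datetime] = None
) -> Optional[float]:
    """Return the wait in seconds suggested by a Retry-After header, or None."""
    # Header names are case-insensitive, so normalize before the lookup.
    value = {k.lower(): v for k, v in headers.items()}.get("retry-after")
    if value is None:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)  # delta-seconds form, e.g. "120"
    try:
        when = parsedate_to_datetime(value)  # HTTP-date form
    except (TypeError, ValueError):
        return None  # unparseable: let the caller fall back to its own backoff
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())
```

Returning `None` for a missing or malformed header keeps the decision about a fallback delay with the caller.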

Immediate actions when you see a 529

Start with calm, documented steps. Pause outbound requests to avoid piling into the protected resource. Check API quotas, tokens, and client privileges, since expired or insufficient credentials can trigger protective blocks. Review the error payload for hints: some vendors include a recommended wait time or a reset window. Implement exponential backoff with jitter to avoid thundering herd scenarios. Open a support ticket if the message references a policy or rate limit you cannot reproduce locally. Finally, reproduce the issue in a staging environment with mock traffic to validate fixes before deploying to production.
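Exponential backoff with jitter can be sketched as a bounded retry loop. This example assumes a hypothetical `send` callable that returns a `(status, retry_after)` pair, and the default delays are placeholders rather than vendor recommendations.

```python
import random
import time
from typing import Callable, Optional, Tuple

# A response is modeled as (status_code, retry_after_seconds_or_None).
Response = Tuple[int, Optional[float]]


def call_with_backoff(
    send: Callable[[], Response],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Response:
    """Call send() until it stops returning 529 or attempts run out."""
    response = send()
    for attempt in range(1, max_attempts):
        status, retry_after = response
        if status != 529:
            return response
        if retry_after is not None:
            delay = retry_after  # honor the server's hint when it gives one
        else:
            # Full jitter: pick a random delay up to the capped exponential ceiling.
            delay = random.uniform(0, min(max_delay, base_delay * 2 ** attempt))
        sleep(delay)
        response = send()
    return response  # still 529 after max_attempts calls: let the caller escalate
```

The random delay spreads retries from many clients over time, which is what prevents the thundering herd; the `max_attempts` bound guarantees the loop ends.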

Diagnosing with logs and metrics

Collect the full error response, including any headers like Retry-After. Compare timestamped logs with traffic spikes and deployment events. Check upstream service dashboards for anomalies such as latency or error rate increases. If you have an API gateway or service mesh, review its rate-limiting rules and quotas. Use tracing (distributed tracing if available) to pinpoint which hop triggers the 529. Document all findings to support future troubleshooting and to improve your team's resilience against similar codes.
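To compare 529s against traffic, it can help to bucket log entries by minute. The sketch below assumes each log entry has already been reduced to an ISO 8601 timestamp and a status code; real log formats will need their own parsing step.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, List, Tuple


def count_529_by_minute(entries: Iterable[Tuple[str, int]]) -> List[Tuple[str, int, int]]:
    """Return (minute, total_requests, count_529) rows sorted by minute.

    Each entry is an (ISO 8601 timestamp, status code) pair.
    """
    totals: Counter = Counter()
    errors: Counter = Counter()
    for timestamp, status in entries:
        minute = datetime.fromisoformat(timestamp).strftime("%Y-%m-%d %H:%M")
        totals[minute] += 1
        if status == 529:
            errors[minute] += 1
    return [(minute, totals[minute], errors[minute]) for minute in sorted(totals)]
```

A minute where both the total and the 529 count jump points toward rate limiting; 529s at flat traffic suggest looking at credentials or the provider's status instead.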

Most common causes ranked by likelihood

Most common reasons, from most to least likely, include: a) rate limiting or anti-abuse protections triggered by traffic spikes or unusual patterns; b) misconfigured credentials, expired tokens, or insufficient permissions; c) a temporary outage or degraded service on the provider's side; d) client-side retries causing excessive load that triggers protective blocks; e) rare edge-case bugs in API gateways or middleware. These factors guide where to look first when diagnosing a 529.

Step-by-step repair approach (high-level overview)

1) Confirm scope and reproduce in a controlled environment to observe 529 behavior without impacting production.
2) Review the exact error payload, headers, and any Retry-After guidance to understand the vendor's recommended wait time.
3) Validate credentials, tokens, and scopes to ensure proper authorization.
4) Check quotas and traffic patterns; reduce concurrency or add client-side throttling if necessary.
5) Implement a safe, backoff-based retry strategy with jitter and monitor results in staging before rolling out to production.
6) If the issue persists, collect diagnostics and escalate to the provider with a concise incident report.

When to escalate and contact support or a professional

If the 529 persists after adjusting retries and quotas, escalate to the service provider with logs, timestamps, and affected endpoints. A professional can help review gateway rules, upstream configurations, and policy settings that you might not control. Sudden, unexplained 529s may indicate a broader policy change or an outage at the provider; having a formal incident ticket streamlines resolution.
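A concise incident report can be assembled from the events you have already logged. This is only a sketch: the event keys `timestamp`, `endpoint`, and `request_id` are hypothetical, and your provider may ask for different fields.

```python
import json
from typing import Dict, List


def build_incident_report(events: List[Dict[str, str]]) -> str:
    """Summarize 529 events as JSON for a support ticket.

    Each event is a dict with the hypothetical keys "timestamp" (ISO 8601,
    all in the same format), "endpoint", and "request_id".
    """
    timestamps = sorted(e["timestamp"] for e in events)
    report = {
        "status_code": 529,
        "event_count": len(events),
        "first_seen": timestamps[0] if timestamps else None,
        "last_seen": timestamps[-1] if timestamps else None,
        "affected_endpoints": sorted({e["endpoint"] for e in events}),
        "sample_request_ids": [e["request_id"] for e in events[:5]],
    }
    return json.dumps(report, indent=2)
```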

Prevention: best practices to avoid future 529s

Adopt proactive monitoring of request rate, error rates, and authentication health. Implement modular retry logic with exponential backoff and jitter, and avoid aggressive parallelism during peak times. Maintain up-to-date credentials and least-privilege access controls. Document service quotas and expected usage patterns for each API you rely on. Regularly review logs and alerts to catch impending rate limits before they impact users.
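One common way to keep outbound traffic under a known quota is a client-side token bucket. The sketch below is a single-threaded illustration; the `rate` and `capacity` values you pick should come from your provider's documented limits, which are not assumed here.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity` and a steady `rate` of requests per second."""

    def __init__(
        self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic
    ):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def try_acquire(self) -> bool:
        """Take one token if available; return False when the caller should wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

Call `try_acquire()` before each request and delay or queue the request when it returns `False`. For multi-threaded clients the method would need a lock around it.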

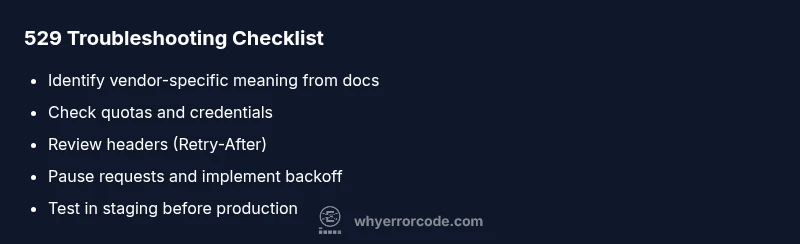

Quick-reference cheat sheet for developers

- Always differentiate between vendor-specific 529s and generic HTTP errors.
- Check headers like Retry-After.
- Use exponential backoff with jitter.
- Verify credentials, tokens, and scopes.
- Reproduce in staging before production.
- Escalate when policy or quota issues are confirmed by logs.

Steps

Estimated time: 30-60 minutes

1) Reproduce in a safe environment

Set up a staging or dev environment that mirrors production traffic. Reproduce the 529 error with controlled load to observe headers, payload, and timing without impacting users.

Tip: Use synthetic data and a feature flag to isolate the experiment.

2) Check credentials and permissions

Verify that API keys, tokens, and scopes are valid and have the necessary privileges. Expired or revoked credentials commonly cause protective blocks labeled as 529.

Tip: Rotate credentials in a controlled manner and monitor for failures.

3) Review quotas and traffic patterns

Audit quotas, rate limits, and recent traffic spikes. A bursty workload or a misconfigured limiter can trigger 529s even with correct credentials.

Tip: Correlate 529 timing with deployment or campaign events.

4) Adjust retry policy and backoff

Implement exponential backoff with jitter to avoid synchronized retries that aggravate throttling. Ensure the retry count is bounded to prevent endless loops.

Tip: Prefer a capped backoff window and include a maximum retry ceiling.

5) Validate fix in staging and monitor

Test under realistic load, verify that the 529s drop, and set up dashboards to alert on future spikes. Only promote to production after stable results.

Tip: Document lessons learned and update incident playbooks.
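For the monitoring part of the final step, alerting on spikes can be approximated with a sliding-window error ratio. This is a rough sketch: the 60-second window and 5% threshold are placeholder defaults, not recommendations, and a real setup would usually live in your metrics system rather than in application code.

```python
from collections import deque
from typing import Deque, Tuple


class ErrorRateMonitor:
    """Track the share of 529 responses over the last `window` seconds."""

    def __init__(self, window: float = 60.0, threshold: float = 0.05):
        self.window = window
        self.threshold = threshold
        self.samples: Deque[Tuple[float, bool]] = deque()

    def record(self, timestamp: float, status: int) -> None:
        """Add one response; timestamps are expected in non-decreasing order."""
        self.samples.append((timestamp, status == 529))
        # Drop samples that have aged out of the window.
        while self.samples and self.samples[0][0] <= timestamp - self.window:
            self.samples.popleft()

    def should_alert(self) -> bool:
        """Return True when the 529 share in the window exceeds the threshold."""
        if not self.samples:
            return False
        errors = sum(1 for _, is_529 in self.samples if is_529)
        return errors / len(self.samples) > self.threshold
```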

Diagnosis: User encounters a 529 error on an API call or service

Possible Causes

- High: Rate limiting or anti-abuse protections triggered by unusual traffic

- Medium: Invalid or expired credentials / insufficient permissions

- Low: Misconfigured client, gateway rules, or quotas

Fixes

- Easy: Pause requests and verify quotas to avoid further blocking

- Easy: Refresh credentials or adjust scopes to restore proper authorization

- Medium: Review rate-limiting rules and adjust retry logic to reduce load

Frequently Asked Questions

What does error code 529 mean in practice?

529 is not a universal HTTP status. It usually indicates protection mechanisms like rate limiting or policy enforcement in a given product. Always check vendor docs and logs for the exact meaning in your environment.

529 typically signals protection or rate limiting, not a universal HTTP error. Check your vendor docs and logs for specifics.

Is a 529 error dangerous to my system?

Not inherently dangerous, but it warns of protective blocks that can affect availability. Treat it as a signal to audit usage, credentials, and quotas and apply safe retry logic.

Not inherently dangerous, but it can impact availability, so audit usage and retry safely.

When should I retry after a 529?

Retry only after following vendor guidance or a sensible backoff with jitter. Do not flood the service with rapid retries, as that worsens the problem.

Retry with backoff and jitter, following vendor guidance.

Should I contact the service provider about a 529?

Yes. If the error persists after basic fixes, contact the provider with your incident details, including timestamps, endpoints, and logs to get specific guidance.

If unresolved, contact the provider with logs and details.

How long should I wait before trying again after a 529?

Follow the Retry-After header if provided; if not, use a conservative backoff window (seconds to minutes) and ramp up slowly.

Use Retry-After if present; otherwise, a cautious backoff then retry.

Top Takeaways

- 529 codes are environment-specific, not universal.

- Audit credentials, quotas, and traffic before retrying.

- Use safe, backoff-based retry strategies with clear escalation paths.

- The Why Error Code team recommends documenting incidents and improving monitoring.