HTTP 500 Error Code: Quick Fixes, Diagnostics, and Prevention

This guide decodes the HTTP 500 error code, lists common server-side causes, and provides practical steps to diagnose, fix, and prevent future outages. Learn methods, tools, and best practices from Why Error Code for reliable web apps in 2026.

According to Why Error Code, the HTTP 500 error means the server encountered an unexpected condition during request processing. It’s a server-side fault, not a client problem, so start with logs and recent deployments. The quickest path is to examine the error trace, roll back recent changes if needed, and restart affected services.

What the HTTP 500 error means and why it happens

The HTTP 500 error code is the server’s way of saying something went wrong while generating the response. The fault does not lie in the client’s browser, cookies, or settings, although a particular request can expose a latent server bug. The problem sits within the server environment or the application logic. Common causes include an unhandled exception in server-side code, a misconfigured rewrite rule, failed database access, or a resource exhaustion event such as memory or thread pool saturation. Because a 500 is intentionally vague, you must look for the root cause beyond the generic message.

In practice, you’ll see variations such as "Internal Server Error," "500.0," or "HTTP 500" in your web server access logs or application traces. The exact error might be logged as a stack trace, a Python/Node/Java exception, or a database error message. The key is to locate where the failure occurs in the request pipeline: routing, middleware, controller logic, database calls, or third‑party services. As Why Error Code notes, securing comprehensive logs and contextual information is essential when diagnosing a 500 error, because the user-visible message is rarely sufficient to pinpoint the cause.
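As a starting point for locating failures, the sketch below counts 500 responses per path in an access log. It assumes lines in the Common Log Format (for example `"GET /checkout HTTP/1.1" 500 1234`); the function name `count_500s_by_path` and the regular expression are illustrative, so adjust them to your server's actual log layout.

```python
import re
from collections import Counter

# Matches the quoted request line and the status code that follows it.
# Assumes Common Log Format; other layouts need a different pattern.
LINE_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3})\b')


def count_500s_by_path(lines):
    """Return a Counter mapping request path to the number of 500 responses."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match and match.group("status") == "500":
            counts[match.group("path")] += 1
    return counts
```

Calling `count_500s_by_path(open("access.log"))` and then `.most_common(5)` on the result would show which endpoints fail most often, which narrows the search before you open the application traces.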

Common root causes for 500 errors

500 errors are almost always server-side. Typical culprits include unhandled exceptions in application code, misconfigured server rules, database outages or long-running queries, dependency failures, and resource exhaustion (memory, CPU, file handles). Other frequent causes are faulty deployments, incomplete migrations, or brittle error handling in middleware. Environment differences between development and production—such as language/runtime versions, configuration drift, or missing environment variables—can also trigger 500s. Finally, issues with third-party services the app depends on can surface as 500 responses if a critical integration fails.
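Missing environment variables are one cause that can be caught before any request arrives. The following is a minimal sketch of a startup check; the variable names `DATABASE_URL` and `SECRET_KEY` are hypothetical placeholders, not settings named by this guide.

```python
import os

# Hypothetical names; replace with the settings your app actually requires.
REQUIRED_VARS = ("DATABASE_URL", "SECRET_KEY")


def missing_env_vars(required=REQUIRED_VARS, environ=None):
    """Return the names of required variables that are unset or empty."""
    env = os.environ if environ is None else environ
    return [name for name in required if not env.get(name)]


def check_environment(required=REQUIRED_VARS, environ=None):
    """Raise at startup rather than failing later with a 500 on a live request."""
    missing = missing_env_vars(required, environ)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
```

Running `check_environment()` once during application startup turns a configuration gap into a failed deploy instead of an intermittent 500 in production.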

Immediate quick fixes you can try

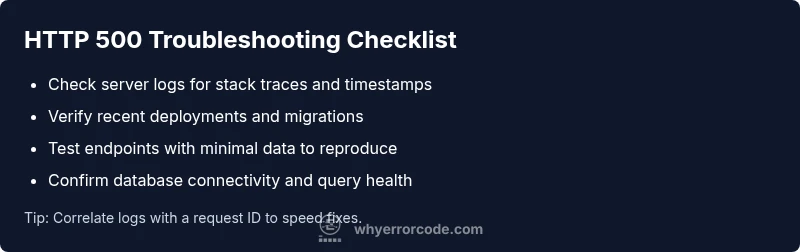

When a 500 error hits, you want fast, safe actions that don’t risk data loss. First, check for a recent deployment or code change and consider rolling back if you suspect it introduced the fault. Restart the affected services to clear transient states and recycle resources. Review server and application logs for the exact exception type and stack trace; search for the request ID and timestamp that match the user reports. If the problem involves a database, verify connectivity, apply any pending migrations, and look for blocking or long-running queries. Finally, ensure that error handling paths return graceful messages and do not reveal sensitive traces to users.
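One way to keep stack traces out of user-facing responses is a catch-all wrapper around the application. The sketch below assumes a Python WSGI app; `safe_errors`, the `app.errors` logger name, and the `X-Request-ID` header are illustrative choices rather than anything prescribed here. It only covers exceptions raised while the app is called, not errors raised later while a lazy response body is iterated.

```python
import logging
import sys
import uuid

logger = logging.getLogger("app.errors")


def safe_errors(app):
    """Wrap a WSGI app so unhandled exceptions become a generic 500 response."""

    def wrapper(environ, start_response):
        # Reuse an incoming request ID if a proxy supplied one (assumed header).
        request_id = environ.get("HTTP_X_REQUEST_ID") or uuid.uuid4().hex
        try:
            return app(environ, start_response)
        except Exception:
            # The full stack trace goes to the log, keyed by request ID.
            logger.exception("Unhandled error, request_id=%s", request_id)
            # The user sees only a generic message plus a reference to quote.
            body = f"Internal Server Error (reference: {request_id})".encode("utf-8")
            headers = [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
            ]
            # Passing exc_info follows the WSGI rule for error responses.
            start_response("500 Internal Server Error", headers, sys.exc_info())
            return [body]

    return wrapper
```

The reference in the response body lets support staff find the matching log entry without exposing exception details to the user.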

How to reproduce and collect evidence safely

Reproduce the error in a controlled staging environment that mirrors production whenever possible, using anonymized or synthetic data rather than raw production records. Capture precise steps, input data, and the exact endpoint. Enable structured logging and request correlation IDs to tie logs across components. Collect server logs, application traces, database error messages, and any proxy or load balancer logs. Avoid performing destructive tests on live systems without a rollback plan. Document environment details: OS, runtime version, server software, and deployment commit.
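Structured logging with a correlation ID might look like the sketch below, which uses Python's standard `logging` and `contextvars` modules. The names `request_id_var`, `RequestIdFilter`, `JsonFormatter`, and `build_handler` are illustrative, and the JSON field layout is an assumption, not a standard.

```python
import contextvars
import json
import logging

# Holds the current request's ID; set it once when a request starts.
request_id_var = contextvars.ContextVar("request_id", default="-")


class RequestIdFilter(logging.Filter):
    """Attach the current request ID to every log record."""

    def filter(self, record):
        record.request_id = request_id_var.get()
        return True


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line."""

    def format(self, record):
        payload = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "request_id": getattr(record, "request_id", "-"),
            "message": record.getMessage(),
        }
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_handler(stream=None):
    """Create a stream handler that emits JSON lines with request IDs."""
    handler = logging.StreamHandler(stream)
    handler.addFilter(RequestIdFilter())
    handler.setFormatter(JsonFormatter())
    return handler
```

After `request_id_var.set(...)` at the start of a request, every log line written during that request carries the same ID, so entries from different components can be joined by searching for it.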

Prevention, monitoring, and best practices

To reduce 500 errors over time, implement robust error handling that catches and gracefully reports failures, plus fallback strategies for critical paths. Instrument your app with centralized logging and a reliable tracing system; correlate events using a request ID. Add health checks and automated remediation for unstable services. Use feature flags and staged deployments to minimize blast radius. Finally, monitor dashboards for error rate spikes and set alerts that trigger on unusual 500 counts, so you can act before customers notice.
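To make the alerting idea concrete, here is a minimal in-process sketch of a sliding-window check on the share of 500 responses. The class name `ErrorRateMonitor` and the default window, threshold, and minimum request count are illustrative assumptions; a production setup would more likely rely on the alerting rules of its monitoring system.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the share of 500 responses over a sliding time window."""

    def __init__(self, window_seconds=60.0, threshold=0.05, min_requests=20):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self.min_requests = min_requests
        self._events = deque()  # (timestamp, was_500) pairs, oldest first
        self._errors = 0

    def record(self, status_code, now):
        """Register one response observed at time `now` (seconds)."""
        is_error = status_code == 500
        self._events.append((now, is_error))
        if is_error:
            self._errors += 1
        self._evict(now)

    def _evict(self, now):
        """Drop events that have fallen out of the window."""
        cutoff = now - self.window_seconds
        while self._events and self._events[0][0] < cutoff:
            _, was_error = self._events.popleft()
            if was_error:
                self._errors -= 1

    def error_rate(self, now):
        self._evict(now)
        total = len(self._events)
        return self._errors / total if total else 0.0

    def should_alert(self, now):
        """Alert only when there is enough traffic to make the rate meaningful."""
        self._evict(now)
        return len(self._events) >= self.min_requests and self.error_rate(now) > self.threshold
```

The `min_requests` guard avoids paging someone because a single failed request on a quiet endpoint produced a 100% error rate.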

Steps

Estimated time: 2-3 hours

1. Reproduce in a safe environment

Create a staging scenario that mirrors production. Use realistic data and note the exact steps to trigger the error. Capture the environment details (OS, runtime, server, and commit).

Tip: Use a disposable dataset to avoid data loss.

2. Check logs and traces

Open server logs, application traces, and database logs around the failure timestamp. Look for exception types, stack traces, and failed queries.

Tip: Enable request correlation IDs to link logs across components.

3. Identify the failing component

Trace the request path to locate the exact function, route, or service that produced the error. Narrow down to a single middleware, controller, or DB call.

Tip: Filter by timestamp and request ID.

4. Validate recent changes

Review the latest commits, migrations, and dependency updates. Check for orphaned migrations or incompatible library versions.

Tip: Use CI tests to catch regression before deployment.

5. Apply a safe fix or rollback

If a recent change caused the issue, roll it back in staging first, then production if safe. Prepare a small patch if rollback isn’t feasible.

Tip: Document rollback steps and approvals.

6. Test the fix in isolation

Run targeted tests on the affected path with both normal and edge data. Confirm the error no longer occurs and responses are valid.

Tip: Use feature flags for controlled rollout.

7. Deploy and monitor

Deploy the fix to production and observe error rate, latency, and logs for at least a few hours. Watch for any regression.

Tip: Set up alert thresholds for rapid detection.

8. Post-incident review

Document root cause, actions taken, and preventive measures. Update runbooks and share learnings with the team.

Tip: Incorporate findings into training and SOPs.
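The environment details mentioned in the first step can be captured with a small script so they end up in the incident record in a consistent form. This sketch assumes a Python runtime and a deployment that is a git checkout; `environment_snapshot` and `current_commit` are illustrative names, and the web server software is not captured here because how to query it depends on the server.

```python
import platform
import subprocess
import sys


def current_commit():
    """Return the checked-out git commit, or "unknown" if git is unavailable."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True,
            text=True,
            check=True,
            timeout=5,
        )
    except (OSError, subprocess.SubprocessError):
        # git missing, not a repository, or the command timed out.
        return "unknown"
    return result.stdout.strip()


def environment_snapshot():
    """Collect OS, runtime, and commit details for an incident report."""
    return {
        "os": platform.platform(),
        "runtime": "Python " + platform.python_version(),
        "executable": sys.executable,
        "commit": current_commit(),
    }
```

Attaching the output of `environment_snapshot()` from both staging and production makes version or configuration drift between the two easier to spot.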

Diagnosis: User reports 500 Internal Server Error when loading a page

Possible Causes

- Unhandled exception in server-side code (likelihood: high)

- Misconfigured server or application routing (likelihood: medium)

- Database query failure or timeout (likelihood: medium)

- Resource exhaustion (memory/CPU) (likelihood: low)

- Deployment with incomplete migrations or faulty dependency (likelihood: low)

Fixes

- Check application logs and stack trace; identify the failing endpoint (difficulty: easy)

- Review recent commits and deployments; roll back if necessary (difficulty: easy)

- Test database connectivity and migrations; fix timeouts or query errors (difficulty: easy)

- Increase resource limits or optimize code; monitor memory/CPU (difficulty: medium)

- Audit server configuration (rewrite rules, proxies, and headers) (difficulty: medium)
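For the database connectivity fix, a quick first check is whether the application host can open a TCP connection to the database at all. The sketch below is a minimal, driver-independent probe; `can_connect` is an illustrative name, and a successful connection only shows the port is reachable, not that credentials or queries work.

```python
import socket


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False
```

For example, `can_connect("db.internal.example", 5432)` (a hypothetical host and the default PostgreSQL port) returning False points to networking, firewall, or an unavailable database rather than application code.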

Frequently Asked Questions

What does an HTTP 500 error indicate?

An HTTP 500 error indicates a server-side fault during request processing. The client’s request is valid, but the server cannot complete it due to an internal issue.

A 500 means the server had a problem processing the request.

Is a 500 error always caused by the server?

Yes. A 500 error points to a server-side fault, such as an exception or misconfiguration. Client-side issues are typically not the cause.

Yes, it’s a server-side fault.

How is 500 different from 502 or 503?

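500 is a generic internal server error: the server hit an unexpected condition while handling the request. 502 (Bad Gateway) means a gateway or proxy received an invalid response from an upstream server. 503 (Service Unavailable) means the server is temporarily unable to handle requests, typically because of overload or maintenance. So 500 points to the application or server itself, 502 to an upstream dependency, and 503 to capacity or availability.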

500 is internal; 502 is bad gateway; 503 is service unavailable.
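The distinction can be encoded in tooling. The sketch below uses the standard `http.HTTPStatus` enum for the official reason phrases; the `TRIAGE_HINTS` wording and the `describe` helper are illustrative additions based on the answer above.

```python
from http import HTTPStatus

# Where to look first for each status; the hint text is illustrative.
TRIAGE_HINTS = {
    HTTPStatus.INTERNAL_SERVER_ERROR: "Check application logs and stack traces.",
    HTTPStatus.BAD_GATEWAY: "Check the upstream server behind the proxy or gateway.",
    HTTPStatus.SERVICE_UNAVAILABLE: "Check capacity, overload, and maintenance windows.",
}


def describe(status_code):
    """Return the standard reason phrase plus a first triage hint."""
    status = HTTPStatus(status_code)  # raises ValueError for unknown codes
    hint = TRIAGE_HINTS.get(status, "No triage hint defined.")
    return f"{status.value} {status.phrase}: {hint}"
```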

What’s the fastest way to fix a 500 on a live site?

Roll back the recent change if feasible, restart affected services, and review logs for the root cause before re-deploying.

Roll back, restart, and check logs.

When should I contact hosting or ops for a 500?

If you cannot identify local causes, or the outage affects production, involve hosting or operations to inspect server health and deployment pipelines.

Contact ops when you’re stuck or when the outage affects production.

How can I prevent 500 errors in the future?

Implement robust error handling, centralized logging, testing in staging, and deployment guardrails like feature flags and staged rollouts.

Add robust error handling, monitoring, and testing.

Top Takeaways

- Identify server-side root causes first

- Prioritize logs, traces, and recent changes

- Use safe rollbacks and minimal deployments

- Automate monitoring and incident reviews