Is Error Code 600 Permanent? Quick Diagnosis and Fix Guide

Is error code 600 permanent? Learn what it means, why it happens, and how to diagnose and fix it quickly, with practical tips from Why Error Code.

A "permanent" error code 600 typically denotes a non-recoverable fault, meaning automated retries are unlikely to resolve the issue. It usually requires manual intervention, configuration validation, and potentially a software or service patch. The exact meaning varies by platform, so start with the logs, verify recent changes, and escalate if the problem persists. Why Error Code emphasizes documenting the root cause for a durable fix.

Is error code 600 permanent? What this phrase really means

The exact meaning of a 600-class error varies by system, but the common thread is permanence: the failure is not expected to be resolved by simple retries or automatic recovery. When users ask whether error code 600 is permanent, they are usually facing a fault state that demands human analysis, careful logging, and a structured remediation plan. According to Why Error Code, this class of error is often tied to configuration mismatches, data integrity issues, or components that have entered a non-recoverable state. In practice, treat it as a red flag: stop automated retry loops, escalate with context, and begin a formal triage. The goal is to determine whether the fault is systemic (affecting multiple workflows) or isolated (a one-off incident) and then apply a targeted fix. This approach reduces noise and helps stakeholders understand the severity at a glance. Time is a factor, so record timestamps, user actions, and environment details to support a fast, auditable resolution.
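The advice to stop automated retry loops can be sketched in Python. This is a minimal illustration, not any platform's actual API: `OperationError`, `PERMANENT_CODES`, and `run_with_retries` are hypothetical names, and treating code 600 as permanent is an assumption to confirm against your own system's documentation.

```python
import time

# Hypothetical set of codes treated as permanent. 600 is listed only because
# this article discusses it; verify against your platform's documentation.
PERMANENT_CODES = {600}


class OperationError(Exception):
    """Hypothetical error type that carries a numeric error code."""

    def __init__(self, code, message=""):
        super().__init__(f"error {code}: {message}")
        self.code = code


def run_with_retries(operation, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Retry transient failures with exponential backoff.

    Permanent failures are re-raised immediately so they can be escalated
    instead of being retried in a loop.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except OperationError as err:
            # Give up at once on a permanent code, or when attempts run out.
            if err.code in PERMANENT_CODES or attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
```

The `sleep` parameter exists only so the delay can be replaced in tests; in normal use the default `time.sleep` applies.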

Steps

Estimated time: 20-35 minutes

1. Reproduce and capture the fault

Trigger the error in a controlled test environment to confirm it is reproducible. Collect exact inputs, user actions, and timestamps, then save relevant logs for later correlation.

Tip: Use a separate test account or sandbox to avoid impacting production data.

2. Check recent changes and configuration

Review recent deployments, config files, and environment variables that could influence the failing path. Look for drift or unapplied migrations that coincide with the error onset.

Tip: Compare with a known-good baseline to quickly spot mismatches.

3. Inspect logs and trace data

Analyze application, system, and access logs for error messages, stack traces, and correlation IDs. Look for patterns around the time of the failure.

Tip: Enable enhanced tracing if available to narrow down the root cause.

4. Validate data and dependencies

Check input data integrity, schema validation, and the health of dependent services. Ensure credentials and tokens are current and permissions are intact.

Tip: Rotate secrets only if necessary and document each change.

5. Implement a controlled fix and verify

Apply the most likely fix in a staging environment, then perform end-to-end tests to confirm the error no longer occurs. Monitor for recurrence and be prepared to roll back if issues emerge.

Tip: Keep a rollback plan and communicate status to stakeholders.
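Comparing the current configuration against a known-good baseline, as suggested in the configuration step above, might look like the following sketch. The function name `find_config_drift` and the sample settings are hypothetical; a real check would load both sides from your actual config files or environment.

```python
def find_config_drift(baseline, current):
    """Return keys that were added, removed, or changed relative to the baseline."""
    added = {k: current[k] for k in current.keys() - baseline.keys()}
    removed = {k: baseline[k] for k in baseline.keys() - current.keys()}
    changed = {
        k: (baseline[k], current[k])  # (known-good value, current value)
        for k in baseline.keys() & current.keys()
        if baseline[k] != current[k]
    }
    return {"added": added, "removed": removed, "changed": changed}


# Hypothetical settings, for illustration only.
baseline = {"db_host": "db.internal", "timeout_s": 30, "schema_version": 12}
current = {"db_host": "db.internal", "timeout_s": 5, "feature_x": True}

drift = find_config_drift(baseline, current)
for kind, entries in drift.items():
    print(kind, entries)
```

Any non-empty category is a candidate to line up against the time the error first appeared.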

Diagnosis: A user reports that a critical operation fails with a permanent error code 600 and the system halts, with no recovery after retries.

Possible Causes

- High: Configuration drift or misconfiguration introduced recently

- Medium: Data integrity issue or corrupted input

- Low: Component or service entered an unrecoverable state due to a bug or incompatible dependency

Fixes

- Easy: Audit recent changes and roll back suspect configurations in a controlled environment

- Medium: Validate data integrity and run data repair or re-import processes

- Hard: Apply a patched version or resolve dependency compatibility issues, then perform a careful redeploy
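For the data integrity fix, a first pass could separate clean records from those that need repair or re-import. The sketch below assumes records are plain dictionaries; `REQUIRED_FIELDS`, `validate_record`, and `split_records` are hypothetical names, and the field list stands in for your real schema.

```python
# Hypothetical required fields and types; replace with your real schema.
REQUIRED_FIELDS = {"id": int, "email": str, "amount": float}


def validate_record(record):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            problems.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(record[field]).__name__}"
            )
    return problems


def split_records(records):
    """Separate clean records from those needing repair or re-import."""
    valid, invalid = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            invalid.append((record, problems))
        else:
            valid.append(record)
    return valid, invalid
```

Keeping the problem list next to each rejected record makes the later repair step easier to audit.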

Frequently Asked Questions

What does error code 600 permanent mean?

It signals a non-recoverable fault in many systems, suggesting retries won’t help and manual remediation is required. Always verify against your platform’s documentation as implementations vary.

Error code 600 permanent usually means a non-recoverable fault requiring manual remediation.

Can restarting fix a 600 error?

Often not. A permanent fault typically requires configuration review, data checks, or a patch rather than a simple reboot.

Restarting rarely fixes a permanent fault; you need targeted troubleshooting.

Which logs should I check for 600?

Start with application logs, then system and access logs. Look for timestamps, error codes, and correlation IDs to pinpoint the failure.

Check app and system logs for clues and timestamps.
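As a rough sketch of that log review, the snippet below groups log lines by correlation ID and keeps only the requests that hit the error code. The log line format, the `code=600` marker, and the function name `related_lines` are all assumptions made for illustration; adapt the pattern to whatever your logs actually contain.

```python
import re
from collections import defaultdict

# Hypothetical log line format: "<timestamp> <LEVEL> [<correlation-id>] <message>".
LINE_RE = re.compile(
    r"^(?P<ts>\S+) (?P<level>[A-Z]+) \[(?P<cid>[^\]]+)\] (?P<msg>.*)$"
)


def related_lines(lines, error_code="600"):
    """Group parsed lines by correlation ID, keeping IDs that hit the error code."""
    by_cid = defaultdict(list)
    for line in lines:
        match = LINE_RE.match(line)
        if match:  # lines that do not fit the assumed format are skipped
            by_cid[match["cid"]].append((match["ts"], match["level"], match["msg"]))
    pattern = re.compile(rf"\bcode={re.escape(error_code)}\b")
    return {
        cid: entries
        for cid, entries in by_cid.items()
        if any(pattern.search(msg) for _, _, msg in entries)
    }


# Made-up sample lines, for illustration only.
sample = [
    "2024-05-01T10:00:00Z INFO [req-1] loading config",
    "2024-05-01T10:00:01Z ERROR [req-1] operation failed code=600",
    "2024-05-01T10:00:02Z INFO [req-2] request completed",
]
hits = related_lines(sample)
```

Returning every line for an affected correlation ID, not just the error line, preserves the events that led up to the failure.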

Is an update likely to fix a 600 error?

Updating dependencies or applying a patch can help if the fault stems from a bug or compatibility issue, but it is not guaranteed.

An update might help if the issue is caused by a bug or incompatibility.

When should I contact support?

Escalate if you cannot reproduce the issue quickly, or if the fix requires access beyond your team’s scope. Provide logs and steps to reproduce.

If you can't fix it quickly, contact support with logs and a reproduction path.

How much does a typical fix cost?

Costs vary widely by root cause and service level; expect ranges for labor and potential parts depending on the fix.

Costs vary; expect different ranges based on root cause and service levels.

Top Takeaways

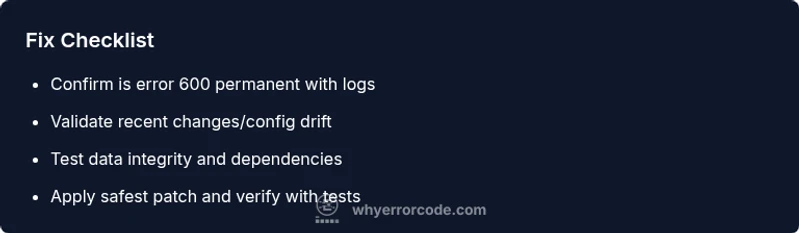

- Verify the error is truly permanent before deep fixes

- Centralize logs and create a reproducible test case

- Triage with a structured plan and clear escalation

- Apply fixes in a safe, auditable sequence

- Prevent future faults with proactive monitoring