Status Code 500: Diagnosing and Fixing Server Errors

A practical, urgency-driven guide to understanding and resolving the 500 Internal Server Error. Learn quick fixes, diagnostic steps, and prevention tactics for developers and IT pros.

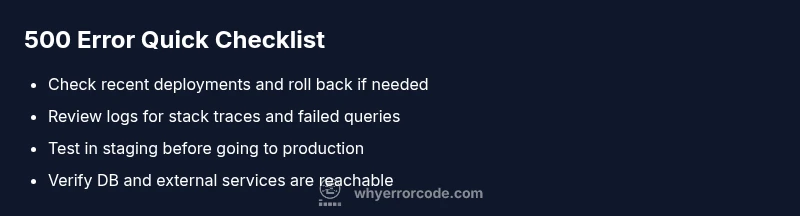

500 Internal Server Error means the server encountered an unexpected condition that prevented it from fulfilling the request. It’s a server-side problem, not caused by the client, and it often hides deeper issues in code or configuration. The quickest way to start fixing it is to check recent changes, review logs for stack traces, and restart affected services. If users are affected, implement a safe error page while you investigate.

What a 500 Internal Server Error means

A 500 Internal Server Error is the HTTP status code that indicates a problem on the server side. It signals that the server failed to complete the request due to an unexpected condition, but it does not specify what went wrong. Unlike client-side 4xx errors, a 500 means the fault lies within the server’s software, configuration, or environment. The moment you encounter a 500, your primary objective is to determine whether the issue stems from recent deployments, code changes, or infrastructure. According to Why Error Code, this category of error should trigger a systematic server-side investigation, not a quick client workaround. In practice, you’ll see this error when an application throws an unhandled exception, a dependency fails to respond, or a misconfiguration prevents the app from booting. The takeaway is simple: treat 500s as a signal to inspect server health, logs, and stack traces, while presenting users with a generic message to avoid exposing sensitive details.

From a debugging perspective, never assume the fault is external. The root cause could be within the application code, an ORM or database query, a cache layer, or the web server itself. The more you understand where the error originates—in logs, traces, and metrics—the faster you’ll converge on a fix. The Why Error Code team emphasizes that a disciplined approach to diagnosing 500 errors reduces mean time to resolution (MTTR) and improves uptime for critical services.
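
As a minimal sketch of that takeaway (keep the stack trace in the server log, show users only a generic message), the WSGI middleware below wraps an application and converts unhandled exceptions into a plain 500 response. The class name `GenericErrorMiddleware`, the logger name `app.errors`, and the use of an `X-Request-ID` header are assumptions made for illustration. The sketch only catches exceptions raised while the app is being called, not ones raised later while the response body is iterated.

```python
import logging
import sys
import uuid

logger = logging.getLogger("app.errors")  # hypothetical logger name


class GenericErrorMiddleware:
    """Turn unhandled exceptions from a WSGI app into a generic 500 response."""

    def __init__(self, app):
        self.app = app

    def __call__(self, environ, start_response):
        # Assumed convention: reuse an incoming X-Request-ID header, else generate an ID.
        request_id = environ.get("HTTP_X_REQUEST_ID") or uuid.uuid4().hex
        try:
            return self.app(environ, start_response)
        except Exception:
            # The full stack trace goes to the server log only.
            logger.exception(
                "Unhandled exception request_id=%s path=%s",
                request_id,
                environ.get("PATH_INFO", ""),
            )
            # The client sees a generic message plus a reference ID for support.
            body = f"Internal Server Error (reference: {request_id})".encode("utf-8")
            headers = [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
            ]
            start_response("500 Internal Server Error", headers, sys.exc_info())
            return [body]
```

To use it, wrap the existing WSGI callable, for example `application = GenericErrorMiddleware(application)`. The reference ID in the response lets a user report the failure without seeing any internal detail.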

Steps

Estimated time: 60-120 minutes

1. Reproduce and validate symptom

   In a controlled environment, reproduce the 500 error to confirm the exact user flow and gather reproduction steps. Document the observed behavior and any error messages in logs. Try to reproduce with a minimized dataset to isolate variables.

   Tip: Use a staging or canary environment to avoid impacting users.

2. Collect and review logs

   Gather server logs, application logs, and stack traces from the time of the error. Look for exceptions, failed queries, or missing resources. Correlate logs with a request ID or trace to pinpoint where the failure occurs.

   Tip: Enable verbose logging temporarily if traces are missing, but disable after diagnosis.

3. Check recent changes

   Identify deployments, config changes, or schema updates that occurred before the incident. Compare current state with a known-good baseline to identify what might have introduced the fault.

   Tip: If feasible, roll back changes to see if the issue resolves.

4. Inspect external dependencies

   Test connections to databases, caches, or third-party services. Verify credentials, endpoints, and network access. A misbehaving external service can propagate a 500 into your app.

   Tip: Create synthetic tests that validate the health of dependencies.

5. Apply a targeted fix and validate

   Implement the smallest necessary change to restore functionality. Verify by re-running the failed user path in staging and production (with monitoring in place). Roll back if unexpected side effects appear.

   Tip: Use feature flags to limit exposure during fixes.
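
The log-correlation step above ("Collect and review logs") can be sketched roughly as follows. This assumes each log line carries a `request_id=<id>` token, which is a hypothetical format; adapt the pattern to whatever your logger emits. The sample lines are made up for the example.

```python
import re


def lines_for_request(log_lines, request_id):
    """Return the log lines tagged with the given request ID, in original order."""
    # Match "request_id=<id>" exactly, so "abc" does not also match "abcd".
    pattern = re.compile(r"request_id=" + re.escape(request_id) + r"(?![\w-])")
    return [line for line in log_lines if pattern.search(line)]


# Made-up sample lines in the assumed format.
sample_log = [
    "2024-01-01T10:00:00Z INFO request_id=abc path=/checkout started",
    "2024-01-01T10:00:00Z INFO request_id=abcd path=/home started",
    "2024-01-01T10:00:01Z ERROR request_id=abc db query failed",
]

for line in lines_for_request(sample_log, "abc"):
    print(line)
```

Filtering by one request ID reduces a noisy log to the sequence of events for a single failed request, which is usually where the first exception shows up.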

Diagnosis: HTTP 500 error observed on a live web app after a deployment

Possible Causes

- High likelihood: Unhandled exception or failed code path

- High likelihood: Misconfigured environment variables or config files

- Medium likelihood: Dependency or service outage (database, cache)

- Low likelihood: Resource exhaustion (memory/CPU)

Fixes

- Easy: Roll back to a stable release or patch the faulty code path

- Easy: Review stack traces and application logs to locate the failure point

- Medium: Validate environment and external service connectivity (DB, API gateways)

- Hard: Increase resources or optimize code paths to prevent timeouts
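
For the connectivity check in the list above, a basic reachability probe might look like the sketch below. The function names `tcp_reachable` and `check_dependencies` are hypothetical. A successful TCP connection only shows that the host and port are reachable; it does not validate credentials or query behavior, so treat it as a first filter rather than a full health check.

```python
import socket


def tcp_reachable(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False


def check_dependencies(deps, timeout=2.0):
    """Map each dependency name to its reachability, given name -> (host, port)."""
    return {
        name: tcp_reachable(host, port, timeout)
        for name, (host, port) in deps.items()
    }
```

A call such as `check_dependencies({"database": ("db.internal", 5432), "cache": ("cache.internal", 6379)})` (hostnames invented for the example) returns a dictionary of booleans that can feed a status page or an alert.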

Frequently Asked Questions

What is the difference between 500 and 502 errors?

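A 500 is an internal server error indicating a fault in the server’s own processing of the request. A 502 Bad Gateway means a server acting as a gateway or proxy received an invalid response from an upstream server. Both are server-side errors, but a 500 points you toward the application that handled the request, while a 502 points you toward the connection between the proxy and its upstream.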

500 means a server fault; 502 indicates a bad upstream response.

Should I enable debug mode in production to diagnose a 500?

Enabling full debug mode in production is risky due to sensitive information exposure. Use controlled, temporary verbose logging, error dashboards, and feature flags to collect needed data without compromising security.

Avoid full debug mode in production; opt for controlled logging.
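
One way to get controlled, temporary verbose logging is to read the log level from configuration instead of hard-coding it. The sketch below assumes an environment variable named `APP_LOG_LEVEL`, which is a made-up name, and falls back to `WARNING` when the variable is unset or invalid.

```python
import logging
import os

# Only these level names are accepted; anything else falls back to WARNING.
_ALLOWED_LEVELS = {
    "DEBUG": logging.DEBUG,
    "INFO": logging.INFO,
    "WARNING": logging.WARNING,
    "ERROR": logging.ERROR,
}


def configure_logging(env=os.environ):
    """Set the root logger level from APP_LOG_LEVEL and return the level used."""
    requested = env.get("APP_LOG_LEVEL", "WARNING").upper()
    level = _ALLOWED_LEVELS.get(requested, logging.WARNING)
    logging.getLogger().setLevel(level)
    return level
```

Raising verbosity then becomes a configuration change followed by a controlled restart, and removing the variable returns the service to its quieter default once the diagnosis is done.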

Can a 500 affect SEO rankings?

Search engines typically treat a short-lived 500 as a temporary server problem, though it can still hurt user experience and slow crawling while it lasts. Fixing the error quickly and returning proper content helps minimize SEO impact.

Yes, but it’s usually temporary if resolved fast.

What should I log when a 500 occurs?

Log request IDs, timestamps, user context (anonymized), stack traces, and affected endpoints. Include database query details and external API responses when relevant to trace the fault.

Capture request IDs and stack traces to trace the fault.
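
As an illustration of those fields, the sketch below builds one JSON-serializable record per failure. The function name `build_error_record` and the field names are assumptions, not a standard schema. Note that hashing a user ID is pseudonymization rather than true anonymization, so check it against your own privacy requirements.

```python
import hashlib
import json
import traceback
from datetime import datetime, timezone


def build_error_record(request_id, endpoint, exc, user_id=None):
    """Build a JSON-serializable record describing one failed request."""
    user_ref = None
    if user_id:
        # A truncated hash hides the raw ID but still lets you group by user.
        user_ref = hashlib.sha256(user_id.encode("utf-8")).hexdigest()[:12]
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "request_id": request_id,
        "endpoint": endpoint,
        "user": user_ref,
        "error_type": type(exc).__name__,
        "stack_trace": "".join(
            traceback.format_exception(type(exc), exc, exc.__traceback__)
        ),
    }


try:
    int("not-a-number")  # stand-in for the failing code path
except ValueError as exc:
    print(json.dumps(build_error_record("req-123", "/orders", exc, user_id="alice")))
```

Emitting one structured record per error makes it straightforward to search by request ID or endpoint in whatever log tooling you already use.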

When should I escalate to a senior engineer or DevOps?

Escalate when the root cause isn’t obvious after 30-60 minutes of diagnosis, when the service affects a wide user base, or when infrastructure issues are suspected. Prepare a concise incident brief before escalation.

If you’re stuck after an hour, bring in senior help.

Is it safe to restart the server to fix a 500?

A restart can clear transient issues, but it may not resolve the root cause. Use controlled restarts, monitor impact, and ensure there’s a rollback plan if the problem persists.

Restart with a plan and monitor closely.

Top Takeaways

- Identify root cause before changes

- Prioritize server-side logs and traces

- Use safe error pages for users during fixes

- Implement monitoring to catch repeats

- Review deployment practices to prevent recurrence